Today was a milestone for Nightshift.

When we first launched the company, we built applications to fund our work: early customer engagements we still support. These are real products with real users, running on Vercel and GCP.

Harness engineering is a methodology we've fully adopted internally. We built Nightshift to support it, and we needed to dogfood it. We didn't want to build in a vacuum or demo with toy workloads. We wanted to ground our features and work in actual delivery.

So we tasked an agent running inside a Nightshift Chicklet with three jobs: perform a full application migration from Vercel and GCP to our cluster on AWS EC2 (which we calculated would lower our costs), find and fix a critical bug in our ETL pipeline, and ship a significant interactive chat feature that a customer was waiting for. Normally this would be a big ask of Claude Code and would require us to hand-tool most of the code across several toolchains.

Running inside Nightshift, Claude Code completed this at roughly 1/10th the time and cost of a traditional dev environment. We didn't write a single line of code or CLI command.

I'll digress for a moment: think about where we were before DevOps. Developers wrote code and threw it over the wall to ops. In hindsight, unifying development and operations feels obvious, and shifting left is now the default approach to security, operations, and the SDLC. But at the time, it wasn't obvious at all. The tooling had to catch up, and even after it existed, adoption took years. Harness engineering is the next step: the idea that your primary job as an engineer is to design the architecture, specs, testing chassis, and validation metrics, while the construction of the code itself is automated. We think it will feel obvious in a few years. It doesn't yet.

How we did it

The idea of having an AI SRE is not novel. In fact, we drew a lot of our motivation from the Kagent project. The difference in our strategy is that we've purpose-built Nightshift primarily for Claude Code and Codex. We start every workflow by dropping a "control plane" Chicklet (the unit of compute inside Nightshift), which we SSH into and use as our orchestrator. Inside that Chicklet, we fired up Claude Code. Because Chicklets come preinstalled with a custom CLAUDE.md that teaches Claude how to interact with the cluster from the inside, Claude knew the APIs for managing other Chicklets.

This concept wasn't new for us. Prior to the Nightshift platform, we were standing up our own telemetry collectors and dev tools and giving Claude access to them, along with the cloud-specific CLIs (e.g., kubectl, Vercel CLI, AWS CLI, GCP CLI).

Source: OpenAI Harness Engineering Blog

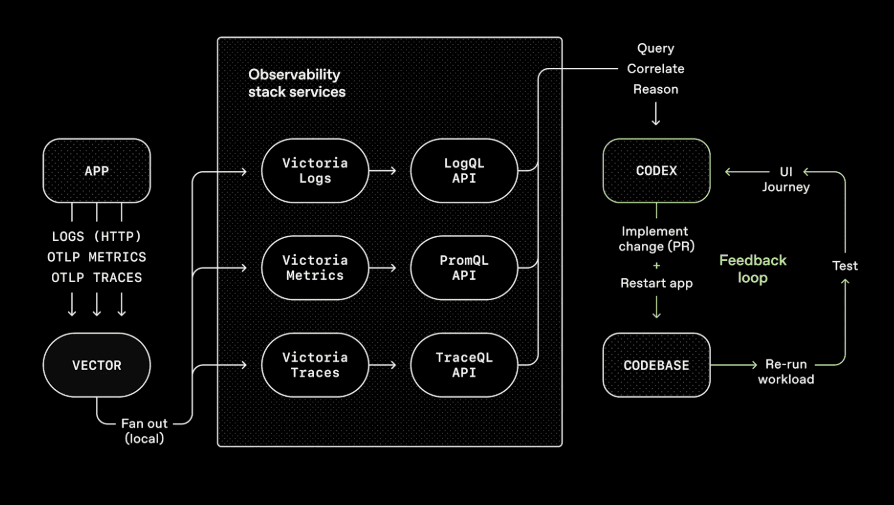

Nightshift is just a purpose-built package of this: give the agent direct access to the infrastructure from inside the cluster, and provide per-service telemetry. In effect, services the agent deploys in the cluster are automatically instrumented with metrics, logs, and traces, and that data is accessible to Claude from any other Chicklet.

With this method, Claude created three backend Go services supporting a Next.js frontend. It used Playwright and Chrome DevTools to debug and test the frontend. When something broke, it could see why immediately, from the same context as the running code.

We think this is the difference between an agent that writes code and an agent that can reliably (and systematically) ship software.

Why This Matters: Harness Engineering at the Platform Level

OpenAI recently published their thinking on harness engineering, the discipline of designing environments, constraints, and feedback loops that make AI agents reliable at scale. The core takeaway is that the agent query loop is solved and the job of an engineer is to build the environment to make the agent effective.

Most harness engineering today happens at the repository level: AGENTS.md files, linters, structured docs, CI checks. That's necessary but insufficient for holistic change to an application. When your agent needs to migrate infrastructure, debug a live ETL pipeline, and test a frontend against real services, repository-level context isn't enough. The agent needs to be in the environment. The CLAUDE.md sitting inside of every Chicklet becomes a map of the cluster. This gave us a harness at the platform level:

- Context engineering happens through the Chicklet's direct access to running services and telemetry, not just static docs.

- Architectural constraints are enforced by the isolation boundary itself: the agent operates within a well-defined compute environment with dedicated resources.

- Feedback loops are immediate. The agent can deploy a test environment, snapshot the database and services, test end to end, observe the result, and raise a PR on a snapshot of runtime state.
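
The feedback loop in the last bullet can be sketched in a few lines of Python. This is a minimal illustration, not Nightshift's API: `run_feedback_loop`, `LoopResult`, and the five callables (`deploy`, `snapshot`, `run_tests`, `observe`, `propose_fix`) are hypothetical names standing in for whatever the agent actually invokes inside a Chicklet.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List


@dataclass
class LoopResult:
    passed: bool
    attempts: int
    log: List[str] = field(default_factory=list)


def run_feedback_loop(
    deploy: Callable[[], Any],           # hypothetical: stand up a test environment
    snapshot: Callable[[Any], Any],      # hypothetical: capture database/service state
    run_tests: Callable[[Any], bool],    # hypothetical: end-to-end test run
    observe: Callable[[Any], str],       # hypothetical: pull logs, metrics, traces
    propose_fix: Callable[[str], None],  # hypothetical: the agent edits the code
    max_attempts: int = 3,
) -> LoopResult:
    """Deploy, snapshot, test, observe, fix; stop on the first passing run."""
    log: List[str] = []
    for attempt in range(1, max_attempts + 1):
        env = deploy()
        snap = snapshot(env)
        ok = run_tests(env)
        log.append(f"attempt {attempt}: snapshot={snap} passed={ok}")
        if ok:
            # At this point a PR could be raised against the recorded snapshot.
            return LoopResult(True, attempt, log)
        propose_fix(observe(env))
    return LoopResult(False, max_attempts, log)


def demo() -> LoopResult:
    """Toy run: the first deploy fails, the 'fix' clears the bug, the second passes."""
    state = {"bug": True}
    return run_feedback_loop(
        deploy=lambda: dict(state),
        snapshot=lambda env: dict(env),
        run_tests=lambda env: not env["bug"],
        observe=lambda env: "tests failed while the bug flag was set",
        propose_fix=lambda evidence: state.update(bug=False),
    )
```

The point of the shape is that observation and the next attempt happen in the same place: the evidence passed to `propose_fix` comes from the environment that just failed, not from a separate dashboard.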

What We Were Able to Ship

This wasn't a demo. The agent performed three distinct workstreams against a production application for a real customer engagement:

Infrastructure migration. Moved the application off Vercel and GCP onto the Nightshift cluster. The agent provisioned and configured the backend services, handled the networking, and verified the migration end-to-end.

Critical bug fix. During the migration we discovered data inconsistencies, which Claude fixed after reproducing the bug in an isolated environment on a subset of the data. It had access to the data flowing through the pipeline, could trace the issue through logs and telemetry, and raised a PR, all from within the same environment.

Feature development. We shipped an interactive chat feature, including frontend work on the Next.js app and the three supporting backend services. The agent used Playwright and Chrome DevTools for testing and debugging, iterating against the live application.
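
We don't describe above how the subset was chosen. One common approach, shown here purely as an assumption, is deterministic hash-based sampling, so the same rows land in the subset on every run and a reproduction stays reproducible. The function names and the `id` field are hypothetical.

```python
import hashlib
from typing import Any, Dict, List


def in_sample(key: str, percent: int) -> bool:
    """Return True if this key falls in the first `percent` of 100 hash buckets."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < percent


def sample_rows(
    rows: List[Dict[str, Any]], key_field: str, percent: int
) -> List[Dict[str, Any]]:
    """Keep only rows whose key hashes into the sample; input order is preserved."""
    return [row for row in rows if in_sample(str(row[key_field]), percent)]


# Hypothetical usage: take roughly 10% of rows, keyed on an assumed "id" column.
rows = [{"id": i, "value": i * 2} for i in range(1000)]
subset = sample_rows(rows, "id", 10)
```

Because membership depends only on the key, the isolated environment and any later rerun see the same records without anyone storing a list of sampled IDs.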

It's so much more than a Sandbox

The industry is converging on a design pattern: agents need rich, constrained environments to do reliable work. Repository-level harnesses get you part of the way there. But for infrastructure work, migrations, debugging live systems, and shipping full-stack features, the harness needs to extend to the platform.

Nightshift is an open-source platform that gives agents isolated, durable, fully-equipped Linux environments where they can operate with the full context of a running system.

We're building Nightshift in the open because we believe this infrastructure should be community-driven. The workloads are changing fast. The platforms that run them need to keep up.

Check out the project at github.com/nightshiftco/nightshift and the docs at docs.nightshift.sh.

We're trying to standardize how AI Agents run on-prem or in the cloud.

Nightshift is open source under Apache 2.0. Built in Pittsburgh & Miami.